OSiRIS At Supercomputing 2018

The International Conference for High Performance Computing, Networking, Storage, and Analysis

November 11–16, 2018

Kay Bailey Hutchison Convention Center, Dallas, Texas

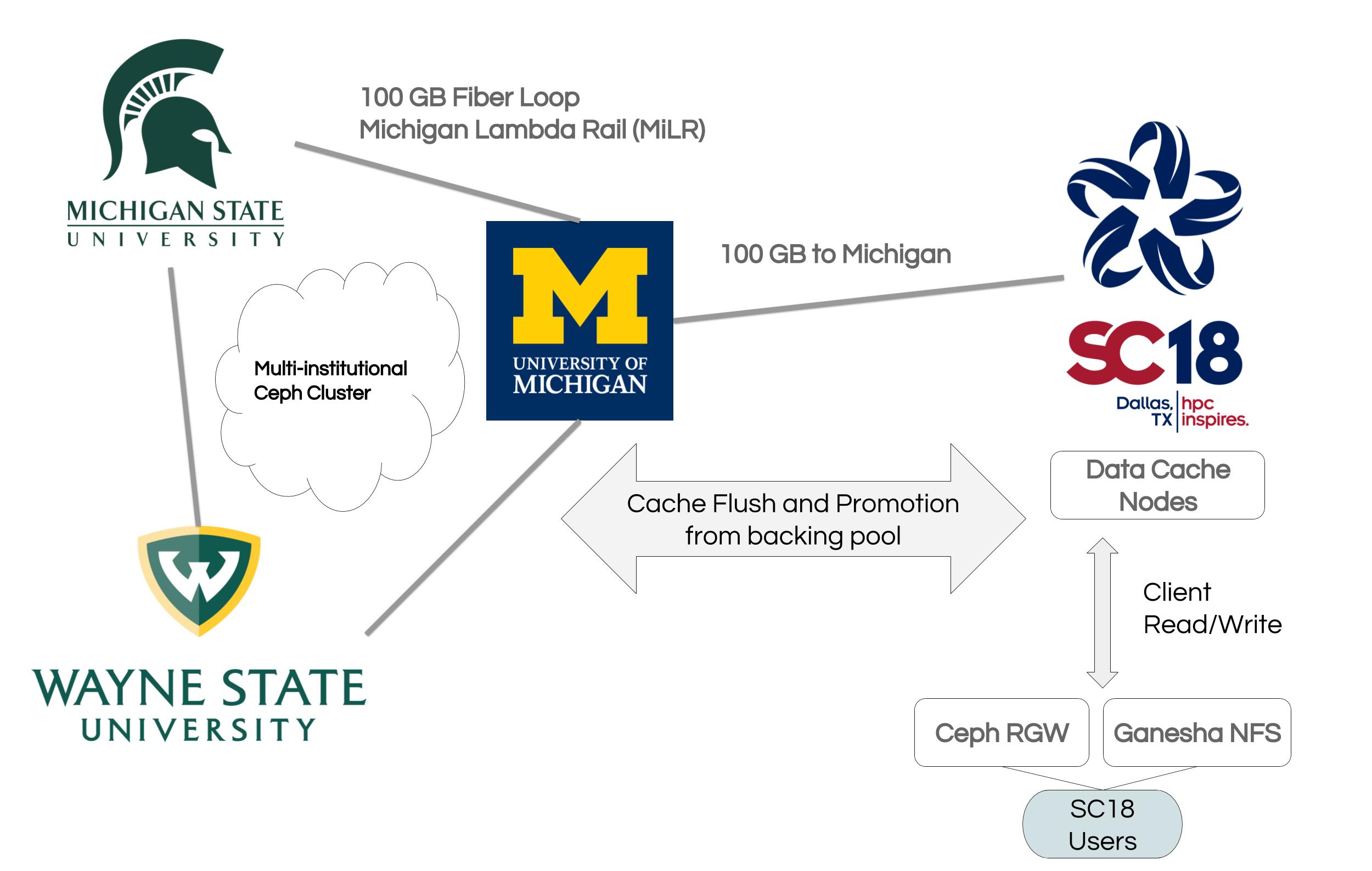

Members of the OSiRIS team traveled to SC18 to set up in the combined University of Michigan and Michigan State University booth!

We set up a rack of equipment designated for the OSiRIS, SLATE, and AGLT2 demos at SC. The rack was shipped as a unit from Michigan and was waiting for us to plug in and configure when we arrived at the conference.

Our demonstration encompassed our storage features, advanced networking, identity management/onboarding, and included a collaboration with SLATE infrastructure utilizing OSiRIS storage.

- Storage Features - caching: Typical Ceph storage clusters are heavily affected by network latency between components and clients. As a result, a client accessing OSiRIS storage in Michigan from the SC showroom would not see performance similar to that of our more proximate users. OSiRIS deployed dedicated storage hardware for a Ceph cache tier at SC18 to show that it is possible to achieve reasonable performance even though the backing data pool components are geographically quite far away. The SC18 collaboration with SLATE also used this configuration, as a 50TB RBD for their XCache container.

- Storage Features - primary OSD: Taking another angle on optimizing around network latency, we also experimented with placing the primary OSD at SC18 for certain storage pools. Our tests compared the benefits and drawbacks of this approach for clients with low latency to the primary OSD versus those farther away, and also compared it to the 'default' Ceph setting of a randomly allocated primary OSD.

- Identity Management and SC18 Test Sandbox: OSiRIS virtual organizations and the people within those organizations are managed by Internet2 COmanage and a collection of plugins we’ve written to tie it together with Ceph storage. For SC18 we created a virtual organization for the show to demonstrate self-service onboarding and credential management. We then made this storage sandbox available to SCinet clients on the show floor via NFS mount or RGW services. Our provisioning infrastructure was also part of the collaboration with SLATE: we used it to provision storage resources for their virtual organization as we would for any dedicated VO.

- SLATE Collaboration: SLATE (Services Layer At The Edge) aims to provide a platform for deploying scientific services across national and international infrastructures. At SC18 they demonstrated their containerized XCache service using storage (RBD) hosted by OSiRIS and served through our Ceph cache tier at SC18.
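As a rough illustration of the cache-tier arrangement described in the caching item above, the sketch below builds the sequence of `ceph` CLI invocations that Ceph's cache tiering documentation gives for layering a cache pool over a backing pool. The pool names are hypothetical, the writeback mode is an assumption, and the post does not state which exact settings were used at SC18. The function only returns the commands; it does not run them.

```python
from typing import List


def cache_tier_commands(backing_pool: str, cache_pool: str) -> List[List[str]]:
    """Return ceph CLI invocations that layer cache_pool over backing_pool.

    Pool names are supplied by the caller; the ones used below are
    hypothetical and not taken from the OSiRIS deployment.
    """
    return [
        # Attach the cache pool as a tier of the backing pool.
        ["ceph", "osd", "tier", "add", backing_pool, cache_pool],
        # Writeback mode: clients write to the cache, which flushes later
        # (an assumed mode, chosen here for illustration).
        ["ceph", "osd", "tier", "cache-mode", cache_pool, "writeback"],
        # Direct client traffic for the backing pool through the cache pool.
        ["ceph", "osd", "tier", "set-overlay", backing_pool, cache_pool],
        # Track object hits with a bloom filter so the tier can decide
        # what to promote and evict.
        ["ceph", "osd", "pool", "set", cache_pool, "hit_set_type", "bloom"],
    ]


if __name__ == "__main__":
    for argv in cache_tier_commands("osiris-backing", "sc18-cache"):
        print(" ".join(argv))
```

Placing the cache pool on hardware at a specific location would additionally need a CRUSH rule that selects only those OSDs, which is not shown here.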
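The primary OSD trade-off described above can be reasoned about with a very simple latency model. In a replicated Ceph pool, reads are served by the primary OSD, while a write is acknowledged only after the primary has forwarded it to the replicas and they have responded. The sketch below captures just that, ignoring disk and processing time. All round-trip times are hypothetical placeholders, not measurements from SC18.

```python
from typing import Dict, Sequence

# Hypothetical round-trip times in milliseconds (not measured values).
LOCAL_MS = 0.5      # client and OSD at the same site
MICHIGAN_MS = 3.0   # between sites within Michigan
WAN_MS = 30.0       # between the SC18 show floor and Michigan


def read_latency_ms(client_to_primary_ms: float) -> float:
    """Reads are served by the primary OSD only."""
    return client_to_primary_ms


def write_latency_ms(client_to_primary_ms: float,
                     primary_to_replicas_ms: Sequence[float]) -> float:
    """Writes wait for the slowest replica before the primary acknowledges."""
    return client_to_primary_ms + max(primary_to_replicas_ms, default=0.0)


def compare() -> Dict[str, Dict[str, float]]:
    """Network-only latency for each client/primary placement combination."""
    return {
        "SC18 client, primary at SC18": {
            "read": read_latency_ms(LOCAL_MS),
            "write": write_latency_ms(LOCAL_MS, [WAN_MS, WAN_MS]),
        },
        "Michigan client, primary at SC18": {
            "read": read_latency_ms(WAN_MS),
            "write": write_latency_ms(WAN_MS, [WAN_MS, WAN_MS]),
        },
        "SC18 client, primary in Michigan": {
            "read": read_latency_ms(WAN_MS),
            "write": write_latency_ms(WAN_MS, [MICHIGAN_MS, MICHIGAN_MS]),
        },
        "Michigan client, primary in Michigan": {
            "read": read_latency_ms(MICHIGAN_MS),
            "write": write_latency_ms(MICHIGAN_MS, [MICHIGAN_MS, MICHIGAN_MS]),
        },
    }


if __name__ == "__main__":
    for scenario, latency in compare().items():
        print(f"{scenario}: read {latency['read']} ms, write {latency['write']} ms")
```

Under these assumed numbers, a primary OSD at the show floor mainly helps reads from nearby clients. Writes still pay the wide-area round trip to the replicas, and clients far from the primary are penalized on both reads and writes.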

Thanks to everyone on the OSiRIS team at University of Michigan, Wayne State University, Indiana University, and Michigan State University for helping to make the conference a great success!

We appreciate the support of Nick Grundler and Dan Eklund from the U-M ITS team. Their hard work before and during the conference made sure we had stable, fast network paths to the conference and on the showroom floor.

Special thanks to Wenjing Wu and Troy Morgan from U-M Physics/AGLT2 for assistance with equipment preparation before and after the conference.

Thanks to Merit Network and Internet2 for assistance in providing 100Gb network paths from Michigan to the conference.

Thanks to Juniper and Adva for providing network equipment.

We would also like to acknowledge the hard-working team that built the SCinet infrastructure to support local and wide-area networks at the conference.