S3

The S3 protocol is best known from Amazon S3 services. It is a well-established ecosystem with many compatible clients. S3 is based on simple HTTP operations such as GET / PUT / HEAD / DELETE.

The OSiRIS S3 endpoint is:

https://rgw.osris.org

This name is a DNS pointer that resolves to S3 gateways located at all of the OSiRIS sites. It is the best URL to use in most cases.

Some of our users may only be able to reach the S3 endpoint located on their campus (typically private compute cluster users).

Campus specific endpoints are:

https://rgw-um.osris.org

https://rgw-wsu.osris.org

https://rgw-msu.osris.org

Please do not connect directly to the hostnames or IP addresses that those pointers resolve to. If you do, SSL certificate verification will fail.

Options for using S3

There is a wide variety of command line tools, GUI tools, and libraries for using S3 storage. Access to OSiRIS S3 is also available via the Globus web interface.

Some tools we can suggest:

- For Python scripts use the Boto module

- To mount S3 buckets like a filesystem use s3fs-fuse

- For command line usage we have information on s3cmd and awscli

- One popular GUI client is CyberDuck

Please note that with any client you will have to specify one of the OSiRIS S3 URLs noted above when creating your S3 connections. Many clients default to Amazon services without asking for a URL.
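As a minimal sketch of pointing a client at OSiRIS rather than Amazon, the Python snippet below builds a boto3 client with an explicit `endpoint_url`. The helper names `endpoint_for` and `make_client` and the short site labels are illustrative assumptions, not part of any OSiRIS tooling; the URLs are the ones listed above.

```python
# Endpoints as listed above; the dictionary keys are illustrative labels.
OSIRIS_ENDPOINTS = {
    "default": "https://rgw.osris.org",
    "um": "https://rgw-um.osris.org",
    "wsu": "https://rgw-wsu.osris.org",
    "msu": "https://rgw-msu.osris.org",
}


def endpoint_for(site="default"):
    """Return the OSiRIS S3 URL for a site label (hypothetical helper)."""
    try:
        return OSIRIS_ENDPOINTS[site.lower()]
    except KeyError:
        raise ValueError(
            f"unknown site {site!r}; expected one of {sorted(OSIRIS_ENDPOINTS)}"
        ) from None


def make_client(access_key, secret_key, site="default"):
    """Create a boto3 S3 client that talks to OSiRIS instead of Amazon."""
    import boto3  # imported here so endpoint_for() works without boto3 installed

    return boto3.client(
        "s3",
        endpoint_url=endpoint_for(site),
        aws_access_key_id=access_key,
        aws_secret_access_key=secret_key,
    )
```

With this sketch, `make_client(access_key, secret_key, site="um")` would connect through the rgw-um endpoint, while omitting `site` uses the general URL.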

Obtaining S3 Credentials

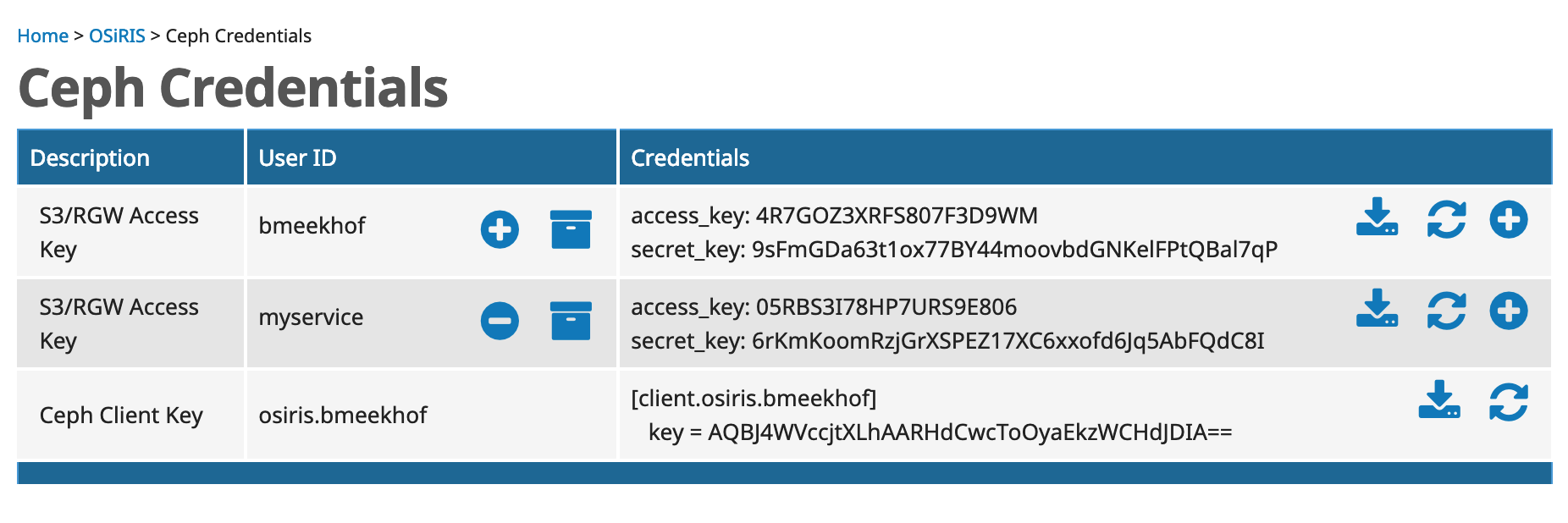

Once you have been enrolled into OSiRIS you can retrieve credentials for S3 access from COmanage.

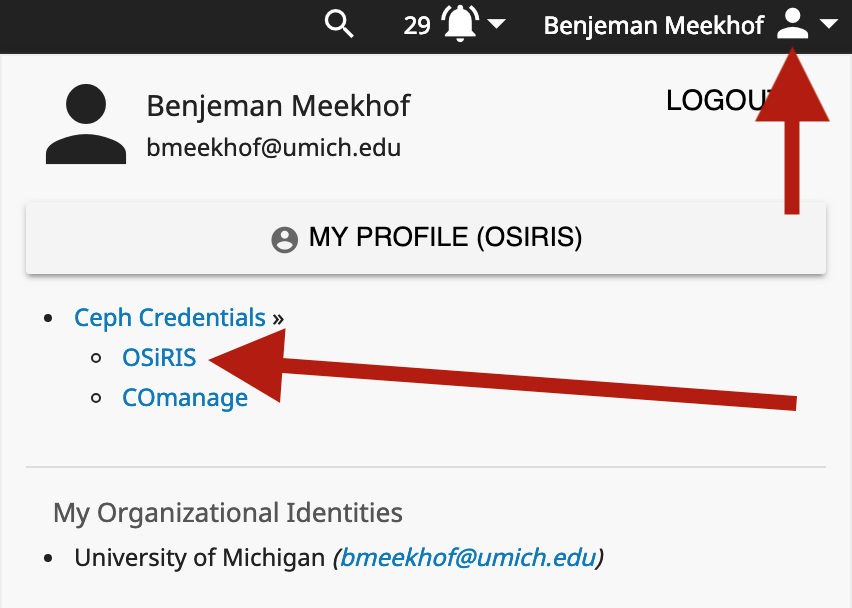

From the COmanage ‘person menu’ on the upper-right part of the screen look for ‘Ceph Credentials’:

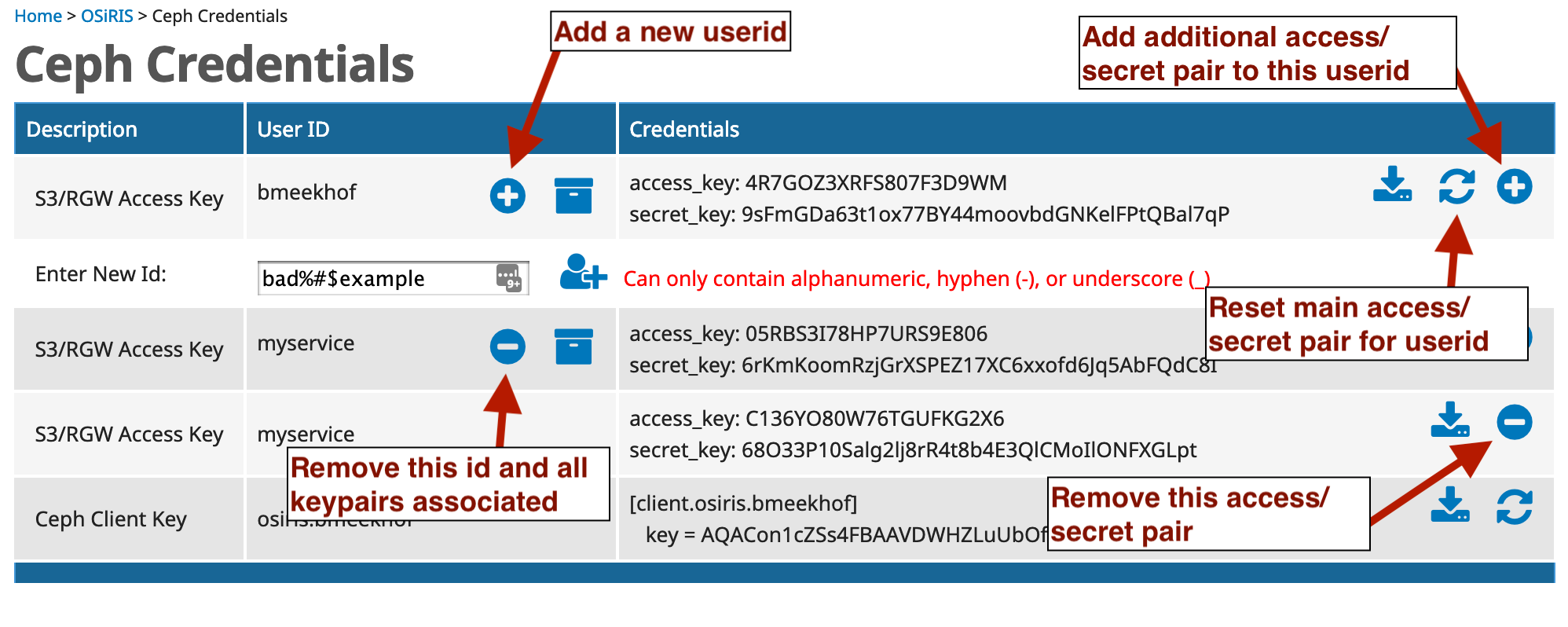

Your available S3 credentials will be listed. From here you can regenerate credentials, add additional access/secret pairs, or add additional userids.

The access_key and secret_key pair are given to your S3 client.

For example, in Python boto3 the access_key goes into the credential argument aws_access_key_id.

The secret_key goes into aws_secret_access_key. These arguments can be passed to the boto3.client function or set in a credential file on your host.

The download icon next to each access keypair will download the credentials in an appropriate format to be saved as ~/.aws/config for use by the Boto client.

More information for Boto is in their documentation.
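The sketch below reads a key pair back out of a credential file with Python's standard `configparser`. It assumes the downloaded file uses the usual INI layout with a `[default]` section holding `aws_access_key_id` and `aws_secret_access_key`; the exact layout of the OSiRIS download is not confirmed here, and `load_keys` is a hypothetical helper name.

```python
import configparser


def load_keys(path, profile="default"):
    """Read an access/secret key pair from an INI-style credential file.

    Assumes a section named after the profile containing the two standard
    AWS key names; raises KeyError if either is missing.
    """
    parser = configparser.ConfigParser()
    if not parser.read(path):  # read() returns the list of files it parsed
        raise FileNotFoundError(path)
    section = parser[profile]
    return section["aws_access_key_id"], section["aws_secret_access_key"]
```

The returned pair can then be passed to `boto3.client` as `aws_access_key_id` and `aws_secret_access_key`, along with an OSiRIS `endpoint_url`.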

Bucket Naming

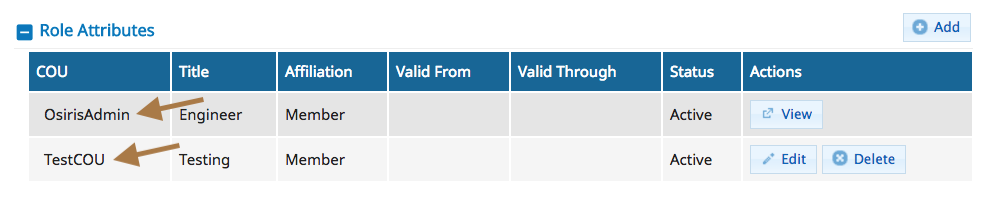

All buckets MUST be named with a prefix matching the name of your virtual org (COU). You can find your COU on the ‘My Profile’ page under ‘Role Attributes’.

The example user here belongs to three COU. Every bucket name must begin with the appropriate COU or the operation will be rejected. For this person, an appropriate name would be ‘osirisadmin-mynewbucket’ or ‘testcou-mynewbucket’, depending on which org is relevant for the data being saved. Your own account may belong to only one COU, in which case that COU is the only valid prefix.
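To illustrate the naming rule, here is a small sketch that builds a prefixed bucket name and checks which COU an existing name belongs to. The hyphen separator is taken from the examples above; the function names are hypothetical, and the server's exact matching rules (for instance case handling) are an assumption.

```python
def cou_bucket_name(cou, suffix):
    """Join a COU and a bucket suffix as '<cou>-<suffix>'."""
    if not cou or not suffix:
        raise ValueError("both a COU and a bucket suffix are required")
    return f"{cou}-{suffix}"


def matching_cou(bucket, cous):
    """Return the COU whose prefix the bucket name carries, or None."""
    for cou in cous:
        if bucket.startswith(f"{cou}-"):
            return cou
    return None
```

Checking a name with `matching_cou` before calling the S3 API gives an early, local error instead of a rejected request.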

Bucket Data Placement

If you only belong to one Virtual Organization (COU) then you can skip this section.

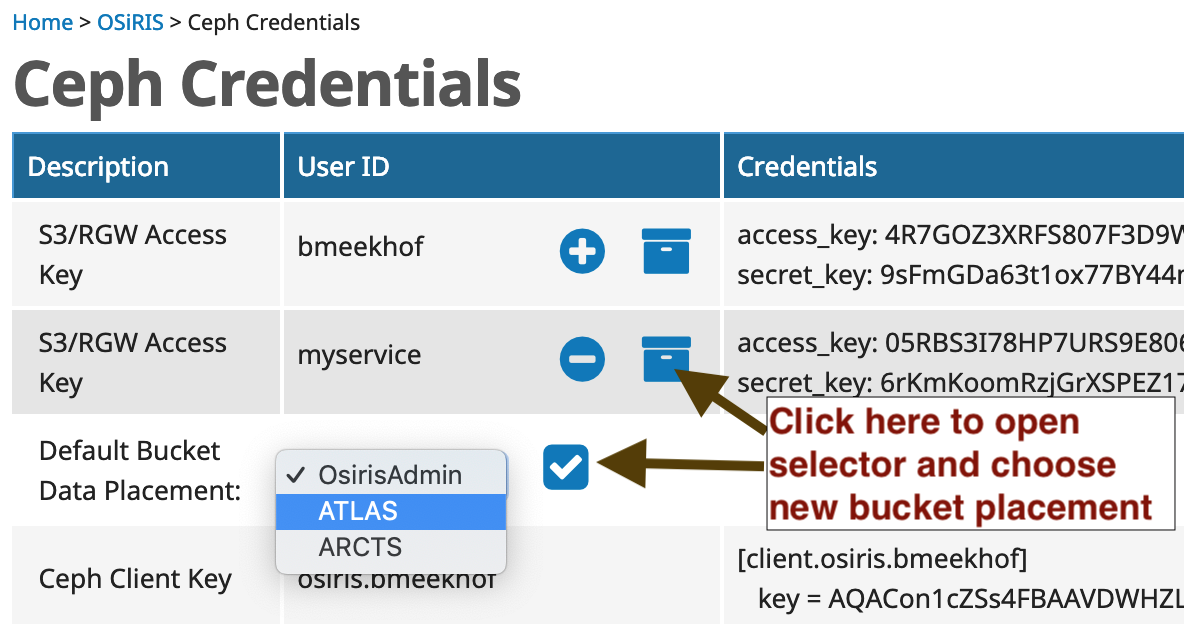

If you belong to multiple COU then you have the option to set your userid(s) to place data buckets with any of the COU you belong to. The placement will default to the first one you joined. The setting only applies to NEW data buckets. Existing buckets cannot be moved but you can create a new bucket with correct placement and move data to it.

Because the setting applies at the bucket level to new buckets, you can change the setting, create a bucket, and then change it to another default. Any data written to the bucket will be placed in the data pool belonging to the COU that was the default when the bucket was created. If you are unsure, you can ask us for the placement of any bucket, or you can query it with the S3 API.

This setting only determines the default bucket placement if none is specified. You can specify it yourself when creating the bucket. For example, in Python boto3, given a client created with boto3.client for an OSiRIS endpoint:

    response = client.create_bucket(
        Bucket='mycou-newbucket',
        CreateBucketConfiguration={'LocationConstraint': ':mycou'},
    )

Note that OSiRIS placement locations are preceded by a colon (:) - this is a requirement imposed by how S3 interprets location constraints (it is technically a storage policy, not a region). Amazon regions such as ‘us-west-1’ are not valid for OSiRIS.

The create bucket command is documented in the Python Boto docs.
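The two cases described above (relying on the default placement, or naming a COU explicitly) can be wrapped in one helper. This is a sketch only: `create_placed_bucket` is a hypothetical name, and `client` is assumed to be a boto3 S3 client already configured for an OSiRIS endpoint.

```python
def create_placed_bucket(client, bucket, placement_cou=None):
    """Create a bucket, optionally pinning its placement to a COU.

    With placement_cou=None the server-side default placement applies.
    """
    kwargs = {"Bucket": bucket}
    if placement_cou is not None:
        # OSiRIS placement locations are written with a leading colon.
        kwargs["CreateBucketConfiguration"] = {
            "LocationConstraint": f":{placement_cou}"
        }
    return client.create_bucket(**kwargs)
```

For example, `create_placed_bucket(client, 'mycou-newbucket', 'mycou')` issues the same request as the explicit call shown earlier.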

S3 Bucket ACL

New buckets are associated with your identity, and other users cannot see them. To give other users access you must set bucket ACLs.

S3 users are identified by a userid. Every OSiRIS person starts with a userid matching their default OSiRIS userid (the same one used for shell access or in a CephFS context), and you can create others. Your userids are listed on the credentials page along with any associated access/secret key pairs.

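Python Boto3 example (the three variants are alternatives, not a sequence):

    import boto3

    # Credentials come from your credential file; use any OSiRIS endpoint listed above.
    client = boto3.client('s3', endpoint_url='https://rgw.osris.org')
    s3 = boto3.resource('s3', endpoint_url='https://rgw.osris.org')

    # Grant read access at creation; S3 grant values take the form id=<userid>
    response = client.create_bucket(Bucket='mycou-newbucket',
                                    GrantRead='id=userid')

    # or using a 'canned' ACL
    response = client.create_bucket(Bucket='mycou-newbucket',
                                    ACL='authenticated-read')

    # or apply to an existing bucket
    bucket_acl = s3.BucketAcl('mycou-bucket')
    response = bucket_acl.put(GrantRead='id=userid')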

Userid is as listed here on the Ceph Credentials page:

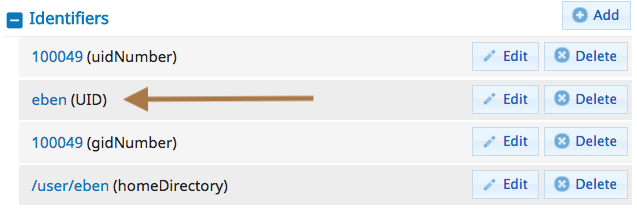

If you are marked as an ‘Admin’ for your COU, the people in your COU will also be visible under the ‘People’ menu in COmanage. If you select one to edit, you can see their primary UID under Identifiers. Everyone has an S3 userid matching this UID.

More specifics on using Boto for bucket ACL are in the Boto 3 Docs or in examples below.
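After setting an ACL it can be useful to read it back. The sketch below summarizes the response of boto3's `get_bucket_acl` call as a mapping from grantee to permissions. It assumes `client` is a boto3 S3 client for an OSiRIS endpoint and that the grantee `ID` field carries the S3 userid; `grants_by_user` is a hypothetical helper name.

```python
def grants_by_user(client, bucket):
    """Map each grantee (userid or group URI) to its sorted permissions."""
    acl = client.get_bucket_acl(Bucket=bucket)
    grants = {}
    for grant in acl.get("Grants", []):
        grantee = grant["Grantee"]
        # Individual users carry an ID; predefined groups carry a URI.
        who = grantee.get("ID") or grantee.get("URI") or "unknown"
        grants.setdefault(who, []).append(grant["Permission"])
    return {who: sorted(perms) for who, perms in grants.items()}
```

A userid that was granted read access should appear in the result with a `READ` permission.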

Encrypting data

OSiRIS S3 supports Server Side Encryption with client-provided keys (SSE-C). In other words, you generate an encryption key and provide it with your upload, and our server encrypts the data. The key is not kept on the server.

More information: Using SSE-C
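As a rough sketch of SSE-C with boto3: generate a 256-bit key locally, then pass it with both the upload and the download. The helper names are hypothetical, and `client` is assumed to be a boto3 S3 client for an OSiRIS endpoint; boto3 handles encoding the key and computing its MD5 for the request headers.

```python
import secrets


def new_sse_c_key():
    """Generate a random 256-bit key. Store it safely: the server keeps no copy."""
    return secrets.token_bytes(32)


def put_encrypted(client, bucket, key_name, data, sse_key):
    """Upload data so the server encrypts it with the caller's key."""
    return client.put_object(
        Bucket=bucket,
        Key=key_name,
        Body=data,
        SSECustomerAlgorithm="AES256",
        SSECustomerKey=sse_key,
    )


def get_encrypted(client, bucket, key_name, sse_key):
    """Download an SSE-C object; the same key must be supplied again."""
    response = client.get_object(
        Bucket=bucket,
        Key=key_name,
        SSECustomerAlgorithm="AES256",
        SSECustomerKey=sse_key,
    )
    return response["Body"].read()
```

Because the key is not kept on the server, losing it means the object can no longer be read.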

Information Resources

More information about the Ceph S3 API and available clients is available at ceph.com.