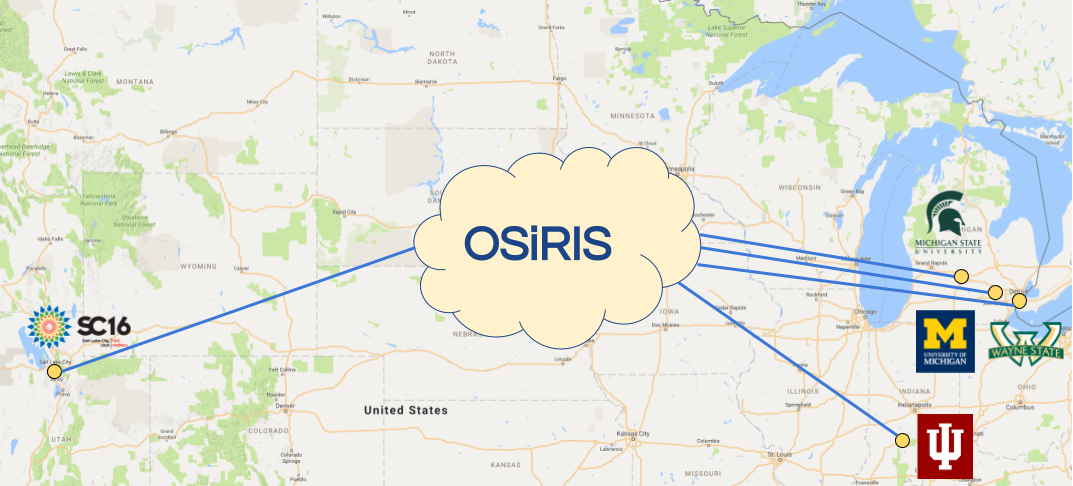

OSiRIS At Supercomputing 2016

The OSiRIS project was featured in the University of Michigan Advanced Research Computing booth at Supercomputing in Salt Lake City this year.

For the week of the conference, November 14-18, OSiRIS deployed a fourth storage site in Salt Lake City at the Salt Palace convention center.

We went to Supercomputing with these goals in mind:

Demonstrate our ability to quickly deploy additional OSiRIS sites. At our booth we presented a talk including a live demonstration of spinning up a new storage block (article and slides).

Demonstrate and test the usability of OSiRIS with a higher-latency site involved (confirming our formal test results).

Test and gather data on using Ceph cache tiers to help overcome latency issues. This test featured LIQID NVMe drives installed in a pair of 100Gb-capable hosts from 2CRSI in our rack at SC (article).

Demonstrate live data movement with the Data Logistics Toolkit created at Indiana University. This demo showcased the movement of USGS earthsat data from capture to storage, not only in the main OSiRIS Ceph cluster but also in a dynamic OSiRIS Ceph cluster deployment built at Cloudlab (article).

At the ‘CEPH in HPC Environments’ BOF we were invited to give a brief overview of the project. Slides from that talk are available here on our website and are also posted here on the BOF overview. You may also be interested in talks from last year’s BOF, although OSiRIS did not yet exist at that time.

To cap off the conference, we participated in breaking the SCinet record for most data transferred via the SC16 network links (article).

Thanks to everyone on the OSiRIS team at University of Michigan, Wayne State University, Indiana University, and Michigan State University for making the conference a great success!

We appreciate the support of Dan Kirkland and Dan Eklund from the UM ITS team. Their hard work before and during the conference made sure we had stable, fast network paths to the conference and on the showroom floor.

Special thanks to Bob Ball, Victor Yang, and Anthony Orians from UM Physics/AGLT2 for assistance with equipment preparation before and after the conference.

Thanks to Zayo and CenturyLink for providing 100Gb network paths from Michigan to the conference.

Thanks to Juniper and Adva for providing network equipment.

We would also like to acknowledge the hard-working team that built the SCinet infrastructure to support local and wide-area networks at the conference.
